As artificial intelligence (AI) advances, its capacity to understand and respond to human behavior is reaching unprecedented levels of sophistication. Underlying this improvement is multimodal AI, a technology that allows machines to process and interpret inputs from several sources of information (text, audio, images, and video) at once. Multimodality is enabling increasingly natural, context-sensitive, and intelligent AI systems. As companies work to enhance customer experience, simplify intricate tasks, and make better decisions, the multimodal AI market is growing.

Understanding Multimodal AI

Multimodal AI systems are designed to approximate human understanding by combining different kinds of data inputs. For instance, a voice assistant that can interpret voice commands, recognize facial expressions, and read visual context is using multimodal capabilities. Unlike unimodal AI, which handles one type of data at a time (e.g., text alone or images alone), multimodal AI processes multiple streams of data together to improve accuracy and contextual relevance.

This makes it especially useful in situations that call for nuanced communication or decision-making. In fields ranging from medical diagnosis and customer service to self-driving cars and content moderation, the ability to combine disparate data inputs is transforming how AI is applied.
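The idea of combining several data streams into one decision can be illustrated with a minimal sketch of "late fusion", one common way (among several) to merge modalities. It assumes each modality has already produced its own class probabilities; the function name `late_fusion`, the modality names, and the weights are hypothetical and chosen only for illustration.

```python
from typing import Dict, List


def late_fusion(scores: Dict[str, List[float]],
                weights: Dict[str, float]) -> List[float]:
    """Combine per-modality class probabilities with a weighted average.

    scores  -- maps a modality name to its list of class probabilities
    weights -- maps the same modality names to non-negative weights
    """
    if not scores:
        raise ValueError("at least one modality is required")

    n_classes = len(next(iter(scores.values())))
    total_weight = sum(weights[m] for m in scores)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive value")

    fused = [0.0] * n_classes
    for modality, probs in scores.items():
        if len(probs) != n_classes:
            raise ValueError(f"{modality} has a different number of classes")
        for i, p in enumerate(probs):
            # Each modality contributes in proportion to its weight.
            fused[i] += weights[modality] * p / total_weight
    return fused


# Hypothetical example: text leans toward class 0, audio toward class 1.
example = late_fusion(
    {"text": [0.6, 0.4], "audio": [0.2, 0.8]},
    {"text": 1.0, "audio": 1.0},
)
```

With equal weights, the fused result is simply the average of the two modalities. Production systems often fuse learned feature representations rather than final scores; this sketch only shows the basic principle.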

Driving Factors Behind Market Growth

The need for more natural and human-like AI engagement is one of the main drivers of the multimodal AI market. Consumers increasingly expect seamless experiences from their digital platforms, such as a smart speaker that can recognize both gestures and voice, or a customer support chatbot that can sense emotional signals through tone and wording. Multimodal AI is essential to meeting those expectations.

In addition, the spread of data in a variety of formats (images, text, audio, and video) requires algorithms that can make sense of them in a holistic manner. As digital content becomes increasingly complex, unstructured, and multimodal, multimodal AI provides an effective means of extracting meaning and actionable information from this abundance of data.

Another key factor is the increasing ease of access to high-end computing facilities and large pre-trained models. Transformer architectures and foundation models such as GPT and CLIP have opened the door to robust multimodal applications, making their development more scalable and accessible to companies across industries.

Market Segmentation

By Component

· Solution

· Service

By Organization Size

· SMEs

· Large Enterprises

By Data Type

· Audio and Video

· Image

· Text

By End Use

· Automotive and Transportation

· BFSI

· E-commerce and Retail

· Healthcare

· IT and Telecom

· Media and Entertainment

Key Players

· Aimesoft Inc

· Alphabet Inc

· Amazon Web Services Inc

· IBM Corporation

· Jina AI GmbH

· Meta Platforms Inc

· Microsoft Corporation

· OpenAI LLC

· Twelve Labs Inc

· Uniphore Technologies Inc

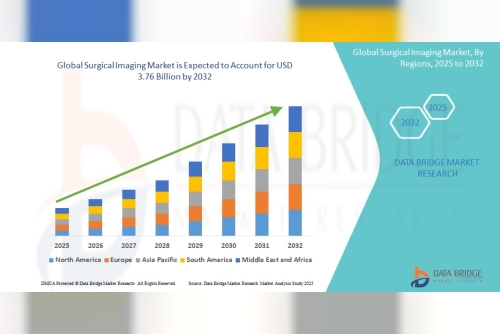

Geography

· North America

· Europe

· Asia-Pacific

· South and Central America

· Middle East and Africa

Challenges in Implementation

Despite its potential, multimodal AI presents several challenges. Integrating different types of data involves complex processing, data alignment, and model training. Ensuring that different data modalities synchronize correctly in real time is technically demanding and requires robust architecture.

Additionally, multimodal systems tend to be data-intensive, and procuring labeled data in multiple formats can be time-consuming. Privacy and bias concerns are compounded as well, since these systems can unknowingly perpetuate stereotypes or misuse sensitive visual and audio information.

Regulatory frameworks are also lagging behind these developments, which can slow adoption in areas such as healthcare and finance, where data management is paramount.
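To make the data alignment challenge described above more concrete, the following is a minimal sketch of pairing samples from two streams by timestamp. It assumes both streams carry timestamps in seconds and that the video timestamps are sorted; the function name `align_nearest` and the tolerance value are hypothetical, and real systems typically need to handle clock drift and buffering as well.

```python
from bisect import bisect_left
from typing import List, Optional, Tuple


def align_nearest(audio_ts: List[float],
                  video_ts: List[float],
                  tolerance: float) -> List[Tuple[float, Optional[float]]]:
    """Pair each audio timestamp with the nearest video timestamp.

    video_ts must be sorted in ascending order. If the nearest video
    timestamp is farther away than `tolerance`, the pair gets None.
    """
    pairs: List[Tuple[float, Optional[float]]] = []
    for t in audio_ts:
        i = bisect_left(video_ts, t)
        # Only the neighbors just before and at the insertion point
        # can be the nearest timestamps.
        candidates = video_ts[max(i - 1, 0): i + 1]
        best = min(candidates, key=lambda v: abs(v - t), default=None)
        if best is not None and abs(best - t) > tolerance:
            best = None  # no video frame close enough to this audio sample
        pairs.append((t, best))
    return pairs


# Hypothetical timestamps: the last audio sample has no nearby video frame.
aligned = align_nearest([0.0, 0.5, 1.0], [0.02, 0.48, 2.0], tolerance=0.05)
```

Samples left unpaired (marked `None`) illustrate why synchronization is demanding: the system must decide whether to drop, interpolate, or wait for the missing modality.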

https://www.theinsightpartners.com/sample/TIPRE00038959

Conclusion

The multimodal AI market represents a transformative leap in how machines understand and interact with the world. By combining sight, sound, text, and beyond, these systems promise smarter, more human-like AI experiences across industries. As technological capabilities mature and integration challenges are addressed, multimodal AI will become a foundational element in next-generation applications—from virtual assistants to autonomous systems and decision-support tools. In a data- and experience-driven world, multimodal AI is leading the charge in smart innovation.
